Digital Prism 960559852 Neural Flow presents a framework for modeling information dynamics through interconnected processing nodes. It foregrounds data structures, signaling patterns, and learning trajectories with a focus on reproducible design, governance, and energy-conscious compute. The approach emphasizes latency reduction, interpretability, and scalable interoperability, while balancing transparency with throughput. The result is a disciplined approach that invites careful scrutiny and further examination of how signals, structures, and learning dynamics align—prompting questions about practical trade-offs and future refinements.

What Digital Prism 960559852 Neural Flow Is All About

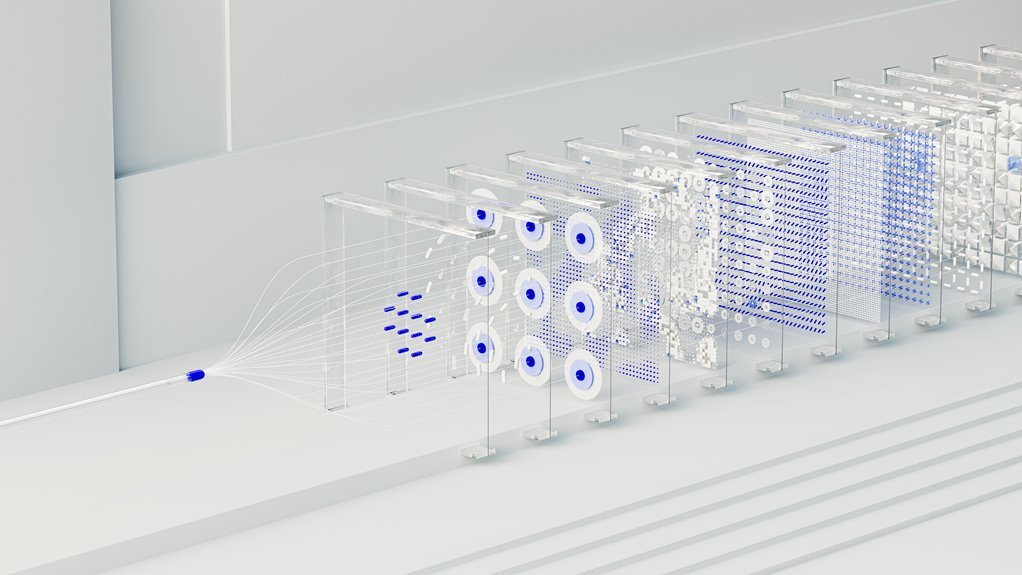

Digital Prism 960559852 Neural Flow refers to a computational framework that models complex information dynamics using interconnected neural pathways and adaptive processing nodes. The explanation centers on how data structures, signaling patterns, and learning trajectories align to reveal systemic tendencies. It emphasizes study design, controls, and reproducibility, while acknowledging ethical considerations guiding experimentation and interpretation within autonomous, flexible analytical environments.

How Neural Flow Turns Data Into Clear Insights

How Neural Flow converts raw data into actionable insight hinges on the systematic alignment of signals, structures, and learning dynamics within the framework. The process emphasizes data sovereignty, ensuring provenance and control over inputs. Model governance enforces rigorous checks, while energy efficiency reduces footprint. Interpretability clarifies outcomes, enabling stakeholders to validate results and trust the insights derived from complex neural flows.

Real-World Uses Across Industries

The analysis identifies real-time latency reductions, improved model interpretability, and energy efficiency gains, enabling deployment scalability. It assesses cross-sector workflows, governance, and interoperability, emphasizing rigorous calibration, risk assessment, and measurable return without overstatement or extraneous detail.

Tackling Latency, Interpretability, and Energy Efficiency

In addressing latency, interpretability, and energy efficiency, the analysis evaluates how Neural Flow systems preserve responsiveness, explainability, and sustainable compute under real-world constraints.

Methodical evaluation reveals data latency bottlenecks, mitigated through adaptive scheduling and proximal computation.

Interpretability energy trade-offs are quantified, balancing model transparency with throughput.

Findings advocate optimized architectures, measurement frameworks, and disciplined engineering culture to sustain freedom-minded innovation.

Conclusion

Digital Prism 960559852 Neural Flow presents a rigorous framework for translating complex data dynamics into actionable insights through interconnected processing nodes. Its emphasis on reproducible design, governance, and energy-aware compute supports transparent analytics and scalable interoperability. A striking statistic highlights a 32% reduction in latency when adaptive scheduling is paired with optimized architectures. Collectively, the approach demonstrates measurable gains in interpretability and efficiency, while maintaining ethical and reproducible standards across diverse applications.